Who Searches Anymore? Why AI & Search Are the Best of Frenemies

In the few short years of AI’s exponential growth, some have been quick to announce the demise of traditional search. But have we been too quick to write the epitaph for search engines?

The real question isn’t whether generative AI will kill search or whether search engines will constrain AI. It isn’t even a battle between search and AI, Google vs ChatGPT.

It’s about whether we can build a future of web search where we can harness the benefits of both AI and traditional search to create a truly transformational knowledge engine.

Key takeaways

- AI is changing how we access information, but that doesn’t mean search is dead. While generative AI tools like ChatGPT and Perplexity are becoming more popular, most people still rely on traditional search engines and may even use both tools in tandem.

- AI chatbots struggle with ambiguous queries, context collapse, hallucinations, and citing reliable sources, limiting trust and traceability. Search promotes diversity and user agency while AI risks homogenisation, obscuring debate and amplifying bias.

- Traditional search infrastructure remains the backbone of AI. Large language models (LLMs) depend on indexed web data from search engines. Without a strong, transparent search infrastructure, AI models can’t provide accurate, up-to-date information.

- The real opportunity lies in combining AI and search. A hybrid model merges AI’s usability with search’s transparency, enabling more intuitive, traceable, and ethical knowledge discovery.

“Nobody Searches Anymore” — But Is That True?

Our digital landscape is undergoing a seismic shift as generative AI reshapes how we access information online.

Many have wondered whether we’re looking at the greatest digital battle of the 2020s: Google vs AI.

After all, the writing may be on the wall for the search monopoly. For the first time in a decade, Google’s search market share dropped below 90% at the end of last year — and it’s stayed there.

A Gartner report predicts that traditional search engine volume will drop by 25% by 2026, losing ground to AI chatbots. And at a Sydney conference this year, Josh Faulks, chief of the Australian Association of National Advertisers, predicted that ChatGPT will surpass Google search within four years.

None of this should come as a surprise, considering 61% of Gen Z and 53% of Millennials already prefer AI tools to traditional search engines, according to a recent Vox Media survey.

AI-powered assistants like ChatGPT, Perplexity, and Claude have undeniably changed how we find information, particularly among younger demographics — and who can blame them?

The curated, conversational responses of generative AI often feel more intuitive than sifting through pages of search results. And that’s not to mention the instant gratification that comes with immediate responses.

We see this in the rise of platforms like Perplexity, which blends AI-powered summarisation with source attribution, or the ubiquitous presence of ChatGPT, functioning as a powerful Q&A engine.

But the numbers oversimplify a complex evolution. Classic search engines remain widely used — at least, for now.

A 2025 survey of 1,500 Americans found that nearly 80% of those questioned still prefer Google or Bing for general information queries.

In the UK, an Online Experiences Tracker (OET) survey of user behaviour found that 90% of British adults still visited search engines in May 2024.

So we’re not seeing a wholesale abandonment of search engines. Instead, most people use AI to complement traditional search, not replace it — and that is where the opportunity lies.

Search isn’t dead. But the interface to knowledge gathering is evolving.

What AI Is Good At — And Where It Fails

AI assistants can summarise vast amounts of information, offer creative suggestions, and even manage contextual reasoning that feels remarkably human — tasks far beyond the scope of traditional search.

Need a quick overview of a complex topic? AI can distil it. Looking for a new recipe with specific ingredients? AI can whip one up (though you may want to proceed with caution).

Generative AI thrives in scenarios requiring rapid summarisation or iterative dialogue, such as brainstorming business ideas or translating complex texts.

However, its current limitations are equally pronounced.

Accuracy remains a persistent challenge

“Hallucinations” — fabricated information presented as fact — are a common occurrence. A Cornell University study found that while ChatGPT performs well on straightforward factual questions, it struggles with complex ‘how’ and ‘why’ queries.

Context collapse is rife

Google’s generative search results have had their own issues, producing what Mike Caulfield calls context collapse, “where the different use contexts (jokes, movies, recipes, whatever) get blended into a single context”.

In their book Verified, Mike Caulfield and Sam Wineburg argue that neither search nor AI can read your mind: neither understands the intent behind your search query.

If you type in an ambiguous query — for example, “how many rocks to eat a day” — you might be after a joke, a debate, the lyrics of a song, or, in this case, a famous satirical Onion article.

A traditional search engine will likely present a diverse list of results, covering most of these search intents. From there, it’s essentially a choose-your-own adventure, matching the right result to your intent.

But AI attempts to interpret the question and provide an answer in summary, with a risk of flattening nuance, obscuring debate, amplifying bias, and delivering misguided advice to serve ‘gravel, geodes, or pebbles with each meal’.

Perspectives are narrowed

Search engines serve as democratic equalisers, granting visibility to niche websites and emerging voices.

On the other hand, AI’s tendency to prioritise popular or synthetically generated content risks creating algorithmic monocultures, where homogenised outputs drown out diverse perspectives.

At its best, search offers neutrality. An ethical, well-designed search engine presents multiple perspectives and lets you choose a path. It’s inherently interactive. AI, on the other hand, assumes authority — often without disclosing where that authority comes from.

And that’s the other limitation at play here: source traceability.

Sources and processes are buried

Sources of AI outputs are often opaque, making it difficult to fact-check the information provided.

And perhaps more critically, the underlying mechanisms that make AI produce answers are frequently what we’ve come to call ‘a black box’. Users have little to no insight into how the generative AI arrived at its conclusions.

AI opacity erodes trust

Misinformation, hallucinations, and the lack of transparency all undermine user trust.

According to an Ofcom Online Nation 2024 report, the most popular reason for using a generative AI tool was to find information or content, yet just 18% of UK users aged 16 and above found AI search results reliable (the number being only marginally higher for those aged 8–15 years).

These are fundamental limitations for information gathering.

Search Is Still the Backbone of Every “Smart” Answer

One rather important fact is consistently overlooked in all the noise about AI versus search: AI assistants can’t conjure knowledge out of thin air (although that doesn’t stop them from trying).

These models are trained on vast datasets typically scraped from the indexed web — the very domain of traditional search engines.

Behind every AI response lies the infrastructure of search: the crawling and indexing frameworks pioneered by search technologies. Perplexity, for example, continues to rely on a web crawling system, a retrieval engine, and ranking algorithms to produce its responses.

These are the fundamental systems that catalogue news reports, research, blogs, and forums. They’re what makes knowledge discoverable, verifiable, and current.
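To make the index and ranking steps a little more concrete, here is a minimal sketch in Python. It is an illustration only, not how Perplexity or any real search engine is built: the `PAGES` data, the example.org URLs, and the `build_index` and `search` helpers are all hypothetical, and the scoring is a plain term count rather than a production ranking algorithm.

```python
import re
from collections import Counter, defaultdict

# Hypothetical mini "crawl": URL -> page text (illustrative data only).
PAGES = {
    "https://example.org/news": "search engines crawl and index the web",
    "https://example.org/blog": "ai assistants summarise web pages",
    "https://example.org/forum": "how search engines rank pages in the index",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Build an inverted index: term -> Counter of {url: occurrences}."""
    index = defaultdict(Counter)
    for url, text in pages.items():
        for term in tokenize(text):
            index[term][url] += 1
    return index


def search(index, query, limit=3):
    """Rank pages by how many times they contain the query terms."""
    scores = Counter()
    for term in tokenize(query):
        for url, count in index.get(term, {}).items():
            scores[url] += count
    return [url for url, _ in scores.most_common(limit)]


index = build_index(PAGES)
print(search(index, "rank pages"))
```

Even in this toy version, every result is a URL you can open and check, which is the traceability an AI layer inherits when it sits on top of an index.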

They’re essential for AI to function effectively since the accuracy of any AI-generated response hinges on the quality and breadth of the underlying search data.

Without a robust and impartial search infrastructure, even the most intelligent large language models (LLMs) are severely limited and prone to bias.

The search framework is as important as ever. It’s just less visible. AI may be disrupting search’s front-end user experience, but under the hood, it’s fuelled by the very thing it’s supposedly replacing.

The Future Isn’t AI or Search — It’s AI and Search

Despite first appearances, the contest between traditional search engines and AI isn’t a zero-sum battle. LLMs and search engines are the perfect partnership in the making.

AI exposes search’s problems with user experience: who wants to sift through dozens of links when you just need a quick answer? Search exposes AI’s reliability issues: what value do direct answers have when you cannot trust their accuracy?

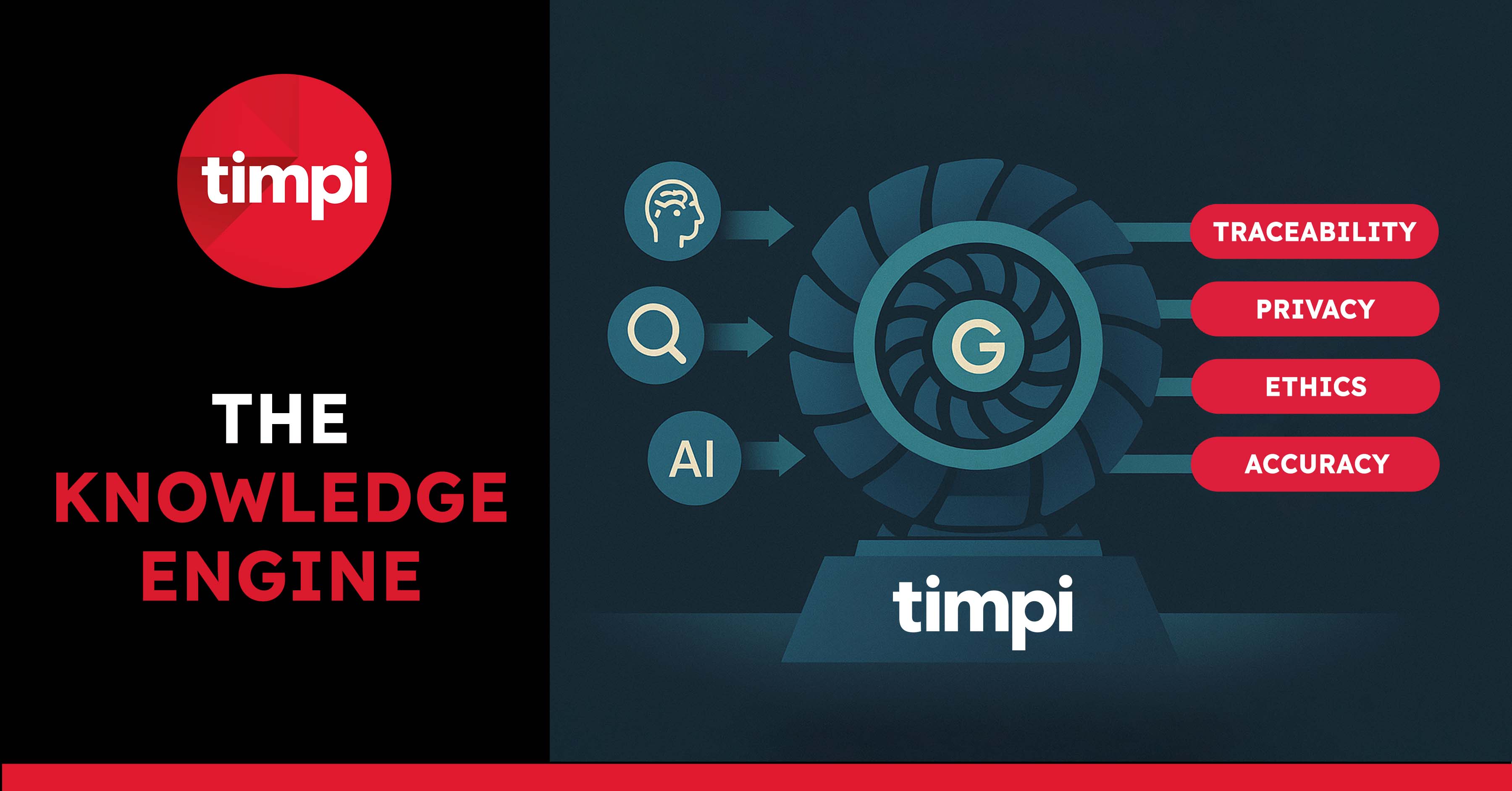

The future of web search is a hybrid system, an ‘answer engine’ that combines AI’s contextual reasoning and natural language interaction with search’s comprehensive coverage and source transparency — all without compromising neutrality or user control.

It’s a new way of searching that feels more like a dialogue than a list of links, yet reveals the source material for traceability and context.
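As a rough sketch of what "dialogue plus sources" could look like in code, the Python below pairs every answer with the documents it drew on. Everything here is an assumption made for illustration: `retrieve` is a stand-in for a real search backend, `summarise` is a stand-in for an LLM call (it only joins snippets), and the corpus is made-up data.

```python
from dataclasses import dataclass


@dataclass
class Source:
    url: str
    snippet: str


@dataclass
class Answer:
    text: str
    sources: list


def retrieve(query, corpus, limit=2):
    """Stand-in for a search backend: keep documents sharing a word with the query."""
    words = set(query.lower().split())
    hits = [
        Source(url, text)
        for url, text in corpus.items()
        if words & set(text.lower().split())
    ]
    return hits[:limit]


def summarise(sources):
    """Stand-in for an LLM: joins snippets and tags each with a citation number."""
    return " ".join(f"{s.snippet} [{i}]" for i, s in enumerate(sources, start=1))


def answer(query, corpus):
    """Return an answer only when there are sources to back it up."""
    sources = retrieve(query, corpus)
    if not sources:
        return Answer("No supporting sources found.", [])
    return Answer(summarise(sources), sources)


corpus = {
    "https://example.org/a": "Search engines index the web.",
    "https://example.org/b": "Cats sleep a lot.",
}
result = answer("how do search engines work", corpus)
print(result.text)
for i, source in enumerate(result.sources, start=1):
    print(f"[{i}] {source.url}")
```

The design choice worth noting is that the answer object never travels without its source list, and an empty retrieval produces an explicit "no sources" reply instead of a confident guess.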

This fusion is already happening in limited ways.

Perplexity cites sources (though often of inconsistent quality). Google’s AI Overviews attempt to synthesise search results (though often poorly, as we’ve seen). Microsoft’s Copilot integrates web search with AI reasoning (though within a surveillance-heavy ecosystem).

AI and search desperately need what the other provides. AI enhances usability. Search ensures traceability. Together, they could redefine how we interact with knowledge.

In this shifting landscape, we have an opportunity to reimagine a new system — an AI-search partnership designed with privacy, ethics, and user agency at its core.

Human-Centred Search in the AI Age

The conversation around AI and search is often framed as a showdown, but at Timpi, we see a crossroads.

One path leads to more of the same: centralised control, opaque algorithms, and surveillance-capitalist models.

The other offers a radical rethink of how we discover and interact with information online — a model grounded in ethical design, decentralisation, and user sovereignty.

We’re not interested in replicating Big Tech’s race to the bottom. Our infrastructure is decentralised by design, built on a growing, independently maintained web index that can crawl 3 billion pages weekly.

This index powers the Timpi search engine, delivering unfiltered, accurate results through a clean, user-first interface free from intrusive ads and hidden agendas.

We also provide real-time data services that respect privacy and promote fair competition, enabling businesses, researchers, and AI developers to tap into unmanipulated, unbiased, and censorship-resistant datasets.

Crucially, in an era where personal data has become a commodity, we refuse to harvest or sell personal data. Instead, we’re out to prove that genuine intelligence doesn’t require intimate knowledge of people’s personal lives.

Our privacy-first approach ensures you can enjoy the benefits of AI-enhanced search without surrendering your digital rights or becoming a target of profiling and manipulation.

It’s not just about building a web index, dataset, or search engine. We’re building a movement to reclaim the internet as a transparent, equitable space where technology amplifies human values, rather than erodes them.

Make the switch to Timpi today for a more transparent and ethical way to search.